artificial intelligence

Need Gift-Buying Advice for That Special Someone? Our AI SantaBot is Here to Help

Published

5 months agoon

By

admin

If you’ve been riding the wave of Bitcoin’s latest surge or watching your crypto wallet grow, the holidays might feel like the perfect time to turn those gains into memorable gifts.

But as any seasoned gifter knows, a big price tag doesn’t always guarantee a big impression.

That’s where SantaBot—our AI agent experiment designed to help you step up your gift-giving game—can help.

The project started with a simple question: Could AI decode personal chat messages to suggest more meaningful presents to your friends and loved ones? As it turns out, it can.

Designed to take the guesswork out of giving, SantaBot digs through conversation histories to build detailed personality profiles, gathering insights about their habits, preferences, and other aspects.

It even maps out Myers-Briggs and Enneagram personality types based on how people talk to each other.

The tool provides creative personalization ideas to make each gift truly unique.

For instance, you could gift your son a baseball bat engraved with his uniform number and initials or surprise your wife with a smartwatch featuring straps in her favorite color. These small, thoughtful touches can make a big impact.

Hands-On

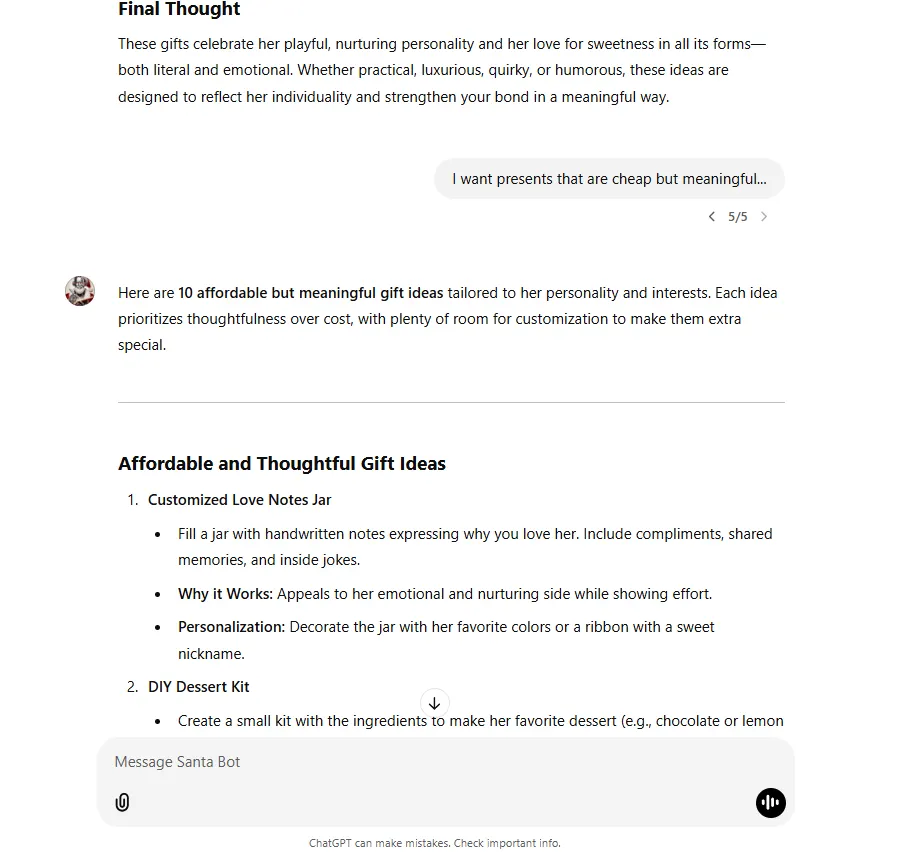

Before giving my bot to the world, I decided to give it a try. I uploaded my WhatsApp conversation with my wife and asked her to tell me the best gifts I could give her.

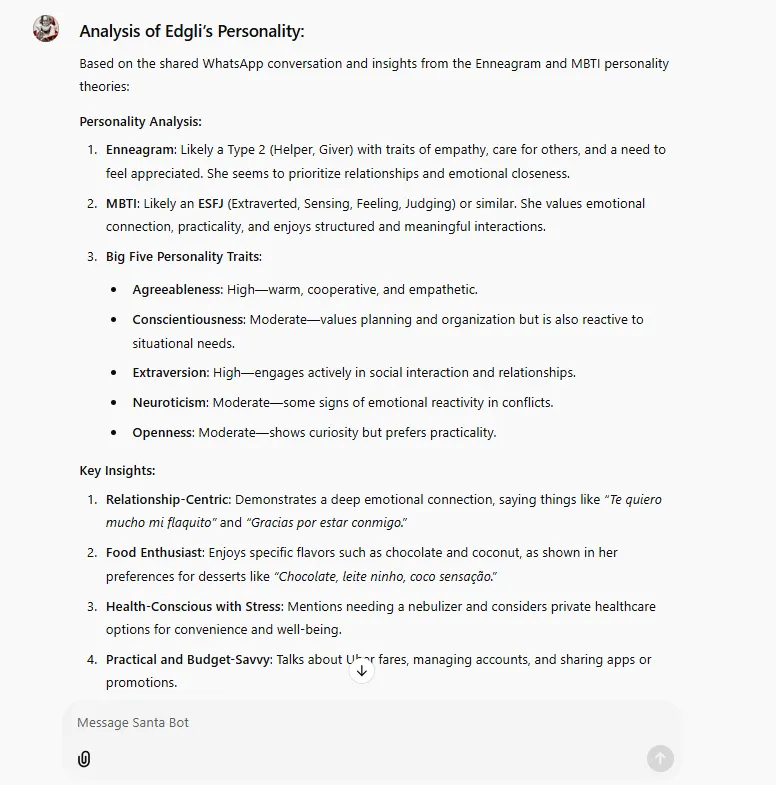

SantaBot psychoanalyzed my wife’s WhatsApp messages, and folks, we’ve got ourselves a certified Type 2 personality (Helper, Giver) with a major love for desserts and fitness.

According to the bot, she’s what personality experts call an ESFJ (Extraverted, Sensing, Feeling, Judging), basically someone who’d organize a group hug and then make sure everyone filled out a satisfaction survey afterward.

The personality analysis shows she ranks high in agreeableness and extraversion, moderate in neuroticism (their words, not mine—I value my life), and has a practical streak that somehow doesn’t apply to transportation choices.

The bot didn’t just stop at basic personality traits. It went full CIA analyst on our conversations, noting some interesting things like her use of “Te quiero mucho mi flaquito” (translation: “I love you, my skinny one”) to her appreciation for little details instead of luxurious things.

SantaBot even picked up on her Uber addiction faster than our credit card company.

It painted a picture of someone who’s health-conscious but won’t walk two blocks if there’s a car service available—which is not 100% but is easy to infer if the only thing you know about her is our conversation history.

Now, for the gift suggestions, these were some of the most exciting picks.:

For the practical side:

- A Miniature Chocolate Fountain with a customized base that says “Edgli’s [her nickname] Sweet Spot.” (considering she showed interest in buying one for future events)

- An “Uber Survival Kit” with a prepaid card (cheaper than buying her an actual car) or a mug with “Boss of Uber Requests” printed on it.

- A literal vault for her chocolate stash with “Keep Out, Unless You’re Amorsito” engraved on it—so I stay away from it.

For the fancy pants moments:

- A custom box with desserts from Venezuela and Brazil.

- A spa kit named “Aromas de Edgli” (much fancier than “Smell Like My Wife”).

- A leather planner embossed with “Amorsito’s Plans.”

- A Star Map Print showcasing the constellations of a meaningful date, like the day we met or the day our daughter was born.

And for when money is no object:

- A smartwatch to help her keep track of her fitness activity and burn calories.

- A designer handbag with her initials embossed.

- A weekend getaway featuring a chocolate-tasting experience in Gramado (basically a desert safari in one of Brazil’s best tourist places).

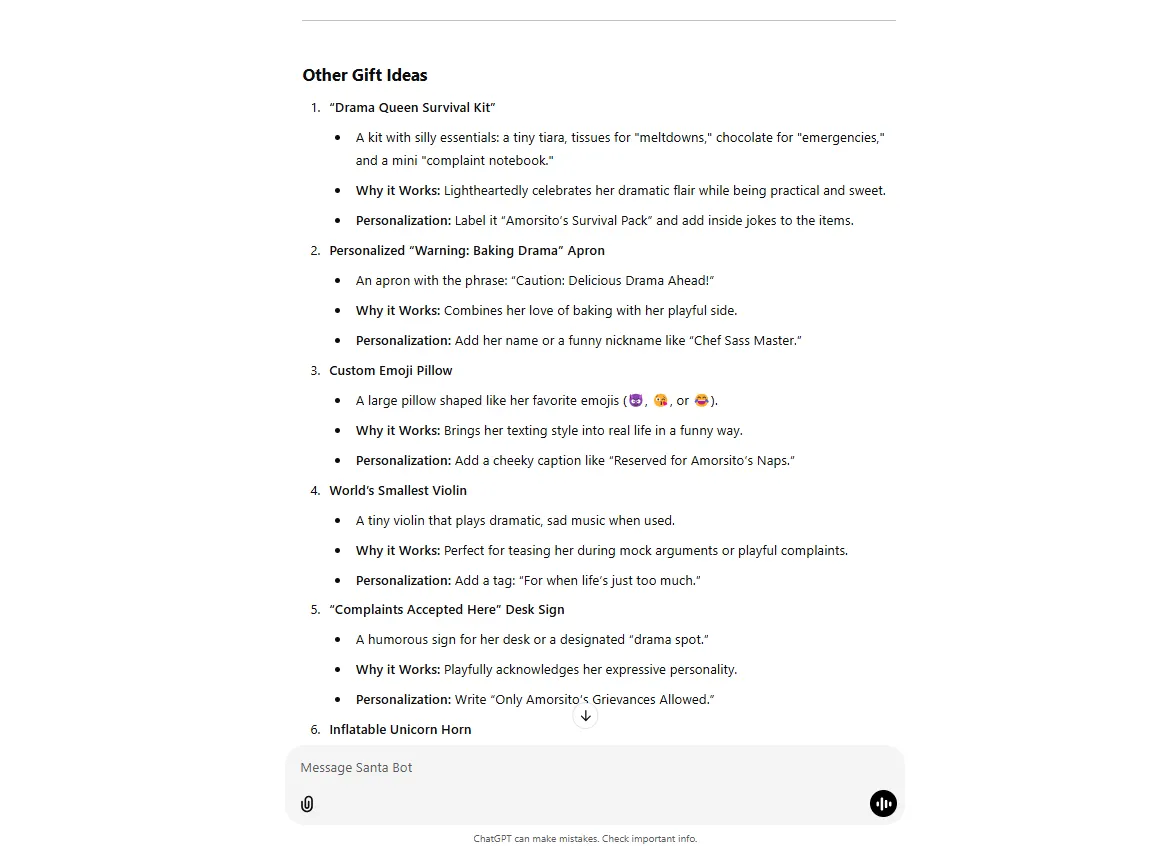

It also recommended some funny gift ideas, including a “Drama Queen Survival Kit” (which she would hate), a “Custom Emoji Pillow” (which she would love) and a personalized apron with a nickname like “Chef Sass Master”

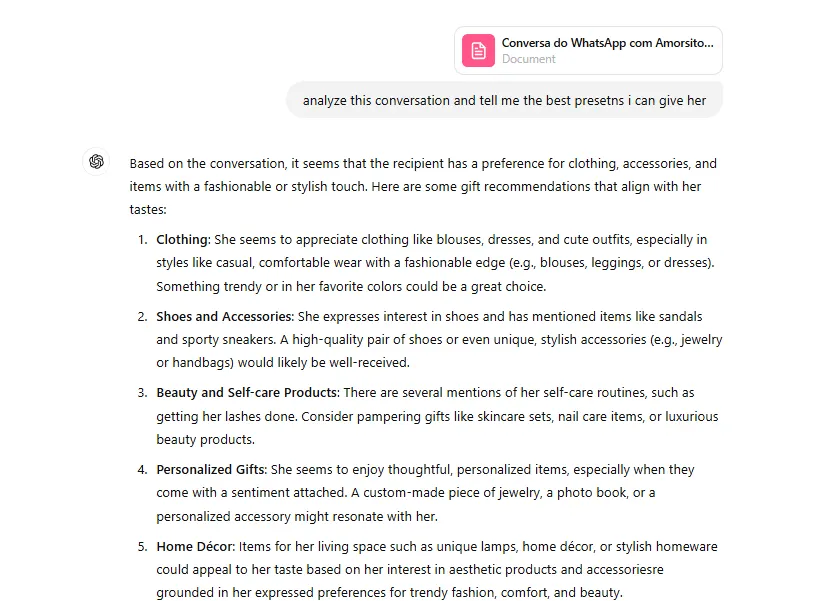

I compared SantaBot head-to-head against regular ChatGPT to see how it stacked up.

The difference was clear—while standard ChatGPT played it safe with generic suggestions, our specialized version picked up on subtle hints.

It’s not like its suggestions were useless, rather than less personal.

How to Get Santa Bot’s Help

To use our tool, you must upload your conversation history and interact with the model, asking for recommendations.

You can then go with follow-up questions, asking for more suggestions, personalization ideas, providing more contextual or personalized information, etc. The more information the AI handles, the better the results should be.

Some good starting prompts can be as simple as “Please carefully analyze this conversation and tell me what presents she/he would like” to things as complex as “What are the best presents I could give to a person with an ENFP type of personality.”

You can also play with the tool and iterate with it. Once it provides a reply, you can ask for more suggestions, ask for funnier recommendations, ask for more romantic gift recommendations, etc. It all depends on your intentions and expectations.

Exporting chats is pretty straightforward, depending on which messaging app you use.

WhatsApp users can export chats from the app, though iMessage folks need to use tools like iMazing to get their conversation data. Similar options exist for Telegram, Facebook, Instagram, and TikTok users. Just google them.

Also, ensure you only upload text conversations, so export your data without photos, voice notes, or documents.

This, of course, means there are privacy concerns that you should address. SantaBot requires access to those conversations to create its detailed profiles.

Sharing such personal data without permission could be unethical. The fix isn’t perfect, but it works: Ask the other person for permission to use the conversation for an AI experiment. If they agree, you’re good to go.

If you don’t want to go that route, you can take other steps.

First, names should be anonymized in exported chats by replacing them with placeholders. For this, open your TXT file, select the option to edit and replace text (this will vary according to your text processor), and choose to change the name for the placeholder in every instance. Save that file and upload it to ChatGPT.

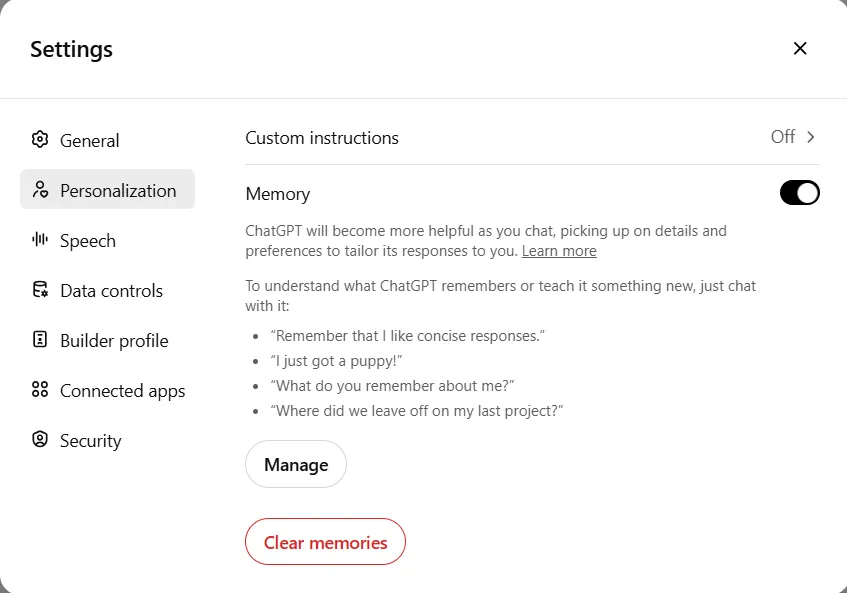

Second, ensure OpenAI cannot use that chat to train its models. For that, the first thing you can do is adjust your ChatGPT settings to disable memories. To do so, click on your profile picture in the top right corner of ChatGPT, go to settings, personalize it, and turn off “Memory.”

Alternatively, you can click on “Manage” after your conversation is done and delete any memory that could be created mentioning your latest chat.

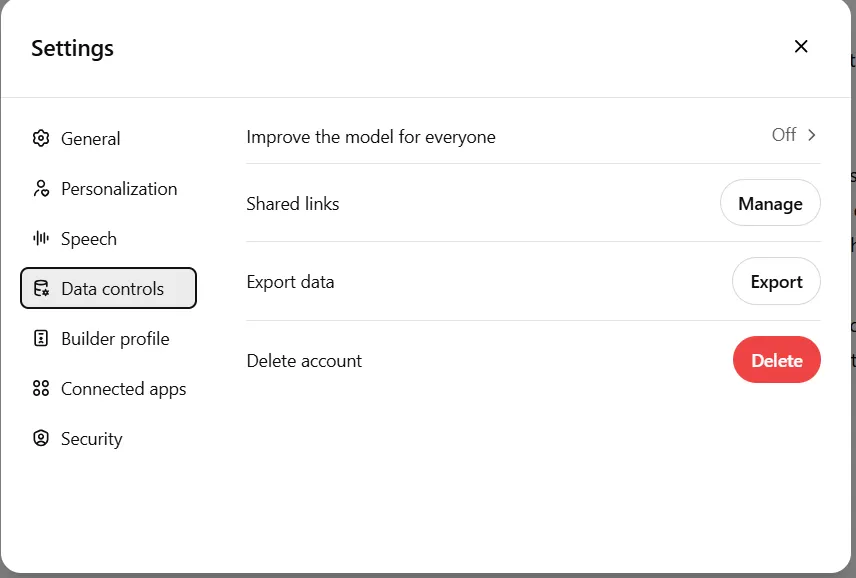

Additionally, you can prevent OpenAI from training its model with your conversation by blocking the capability of using your data—which is allowed by default.

To change that, go to Settings, click on Data controls, and turn off the option “Improve the model for everyone.” This sounds pretty, but in non-corpo language, it can be translated as “Let OpenAI use your conversations to train its models for free and probably charge you more once they get more powerful.”

Overall, building GPTs and specialized agents can bring practical solutions to everyday challenges, like the art of gifting.

Our AI may surprise you with clever ideas that turn ordinary presents into unforgettable gestures so you can be as successful in your family reunions as you think you are trading crypto.

At the very least, when the presents miss the mark, you’ll have something better to blame than your lack of creativity.

Edited by Sebastian Sinclair

Generally Intelligent Newsletter

A weekly AI journey narrated by Gen, a generative AI model.

Source link

You may like

Crypto markets ‘relatively orderly’ despite Trump tariff chaos: NYDIG

Fartcoin ‘Hot Air Rises’ — $1.50 Just A Whiff Away

What happened to the RWA token?

Crypto Strategist Sees Solana-Based Memecoin Surging Higher, Says One AI Altcoin Flashing Strong Chart

Mantra Team Responds As The OM Token Price Crashes Over 80% In 24 Hours

This Week in Crypto Games: Gaming Tokens Crash Out, Eve Frontier Opens Up

artificial intelligence

Where Top VCs Think Crypto x AI Is Headed Next

Published

17 hours agoon

April 13, 2025By

admin

The proliferation of mainstream artificial intelligence (AI) tools in the last couple of years has stirred the crypto and blockchain industry to explore decentralized alternatives to Big Tech products.

The synergy between AI and blockchain is built on addressing the risk of centralized ownership and access to data that powers AI. The theory goes that decentralization can mitigate against the entire AI economy being powered by the data owned by a few tech behemoths like Alphabet (GOOG), Amazon (AMZN), Microsoft (MSFT), Alibaba (9988) and Tencent (0700).

It is unclear as yet whether or not this will prove to be a significant problem at all, much less whether the blockchain industry will be able to solve it. What is clear, however, is that crypto venture capitalists (VCs) are willing to spend millions of dollars finding out. Decentralized AI has thus far attracted $917 million in VC and private equity money, according to startup deal platform Tracxn.

The question remains whether the trend of investing in blockchain-based AI is still built on hype or has now transcended to being the real deal.

Blockchain investment company Theta Capital described AI x crypto as “the inevitable backbone of AI,” in a recent “Satellite View” report, which explored insights and outlooks from the sector’s prominent investors.

AI agents

“No trend stands out more than the intersection of AI and crypto,” the report said, using the examples of AI agents trading on blockchains and even launching tokens.

This may appear to be a more sophisticated form of speculation for degens, but Theta argues it’s a route to tackling some of AI’s problems that only crypto can solve.

“Crypto wallets enable the participation of autonomous agents in financial markets,” according to the report. “Decentralized token networks are bootstrapping the supply side of key AI infrastructure for compute, data and energy.”

The report’s conclusion is far from being hype and speculation; AI x crypto is “the new meta.” Meta is short for “metagame,” a term borrowed from gaming referring to the dominant way of playing with regard to characters, strategies or moves based on the competitive landscape.

Decentralized AI

Alex Pack, managing partner of blockchain venture capital firm Hack VC, described Web3 AI as “the biggest source of alpha in investing today,” in the “Satellite View” report.

Hack VC has dedicated 41% of its latest fund to Web3 AI, according to the report, in which it sees the main challenge as building a decentralized alternative to the AI economy.

“AI’s rapid evolution is creating massive efficiencies, but also increasing centralization,” Pack said.

“The intersection of crypto and AI is by far the biggest investment opportunity in the space, offering an open, decentralized alternative.”

One of Hack VC’s most prominent portfolio companies is Grass, which encourages users to participate in AI networks by offering up their unused internet bandwidth in return for tokens.

This is designed as an alternative to large firms installing software code into apps in order to scrape their users’ data.

“Users unwittingly donate their bandwidth without compensation,” Grass founder Andrej Radonjic said in Theta’s report.

“Grass provides an alternative [by] forming a massive opt-in, peer-to-peer network able to produce high-quality data at the scale of Google and Microsoft.”

The dreaded AI “takeover”

Decentralized AI presents risks for investors, Theta concedes. It could lead to the proliferation of all the least desirable facets of the internet as it already exists: putrid online discourse, spam emails or vapid social media content in the form of blogs, videos or memes. In the crypto world, an example of this may be the creation of meme tokens. The questionable endorsements, the wash trading and the pump and dumps can all be handled by AI engines even more efficiently than humans.

Some VCs see blockchain as the basis for mitigation. Olaf Carlson-Wee, CEO and founder of Polychain, provided the examples of proof-of-humanity mechanisms to verify that users are human and disincentivizing spam through micropayments or spam.

“If sending an email costs $0.01, it would destroy the economics of spam while remaining affordable for average users,” he said in the report.

With blockchain possibly providing some of these safeguards, Carlson-Wee believes AI will underpin digital and financial systems, as they could outperform humans in markets. This reality, he claims, would be gladly accepted, as opposed to dreaded as some sort of bleak dystopia.

“Over time, AI systems will evolve into long-term capital allocators, predicting trends and opportunities years into the future, [which] humans will entrust their funds to, because of the superior ability to make data-driven decisions,” Carlson-Wee said.

“The AI takeover won’t be a war we lose – it will be a suggestion we agree to,” he concluded.

Source link

artificial intelligence

How a Philosopher Who Criticized Trump and Musk Turned Out to Be an AI Experiment

Published

1 day agoon

April 12, 2025By

admin

Jianwei Xun, the supposed Hong Kong philosopher whose book “Hypnocracy” claims Elon Musk and President Donald Trump use utopian promises and empty language, never really existed, at least not physically.

Instead, the acclaimed author was a “collaborative” creation between Andrea Colamedici, an Italian publisher, and two AI tools – Claude from Anthropic and ChatGPT from OpenAI.

“I wanted to write a book that would help people better understand the new ways power manifests itself,” Colamedici told Decrypt.

But it wasn’t until after an investigative report from L’Espresso that Xun’s website was updated to acknowledge the experiment, snapshots from Wayback Machine reviewed by Decrypt show.

Still, the book received critical acclaim.

L’Espresso reports that L’Opinion, a French daily, had detailed how President Emmanuel Macron had “appreciated” Xun’s writings. Earlier in February, a roundtable at the World AI Cannes Festival extensively discussed Xun’s ideas.

Éditions Gallimard, a leading French publisher, has committed to a new translation from the Italian original, after the first edition in French from Philosophie Magazine. A Spanish translation from Editorial Rosamerón is slated for release on April 20.

Vibe philosophy?

Xun was “an exercise in ontological engineering,” Colamedici explained in a post-revelation interview with Le Grand Continent.

While this experiment with AI looked novel, critics point out the book could be in trouble.

The European Union’s AI Act, approved in March 2024, considers failure to label AI-generated content a serious violation – a requirement critics claim Colamedici’s experiment disregarded.

In previous versions of its bio, Xun was described as a “Hong Kong-born cultural analyst and philosopher” who studied at “Dublin University.”

That wasn’t true.

According to an anonymous source from the University College of Dublin’s philosophy department, no person named “Jianwei Xun” exists in their database or those of other Dublin-based universities.

“The fact that the author had inverted the Chinese order ‘surname-name’ was an immediate red flag,” Laura Ruggieri, a Hong Kong-based researcher, told Decrypt, explaining how she spotted inconsistencies as early as February.

Ruggieri previously taught semiotics—the study of symbols and signs—at the Hong Kong Polytechnic University. She asked her colleagues about Xun. Nobody knew who that was.

“Not a single one of them has ever met Xun or heard his name,” Ruggieri said. “If Colamedici had used his real name and admitted that AI had written the book, no one would have bought it.”

Responding to those allegations to Decrypt, Colamedici claimed these were deliberate clues “left for those willing to question and investigate,” claiming the revelation was “predetermined.”

“We actually did everything possible to make Xun’s non-existence evident to anyone with even minimally inquisitive eyes,” Colamedici said.

Colamedici insists that AI did not write the book. Instead, Claude and ChatGPT “served as interlocutors.”

In the words of Xun

The book describes itself as a “journey into the fractured mirror of modern reality,” and discusses how Musk and Trump have constructed an alternate reality through obsessive repetition.

It was written “for those who suspect that the world they see is only a shadow of something far more complex,” its Amazon blurb claims.

Xun’s thoughts center on “hypnocracy,” describing a regime that exerts control through “algorithmic modulation” of collective consciousness instead of censorship.

In English: fake news.

Xun claims that Trump’s speeches and social media posts create conditions of uncertainty.

Trump “empties language: his words, repeated endlessly, become empty signifiers, devoid of meaning yet charged with hypnotic power,” Xun wrote.

Xun claims Musk makes promises “destined not to materialize,” by “flooding our imagination” with ventures such as space colonization and neural interfaces.

“Together they modulate desires, rewrite expectations, colonize the unconscious,” the AI philosopher wrote.

Spokepeople for Musk and Trump did not immediately respond to Decrypt’s request for comment.

Edited by Sebastian Sinclair

Generally Intelligent Newsletter

A weekly AI journey narrated by Gen, a generative AI model.

Source link

artificial intelligence

Midjourney v7 Review: The Former Industry Standard Struggles to Keep Up

Published

6 days agoon

April 7, 2025By

admin

The alpha version of Midjourney v7, which was released last week, arrives at a time when the once-dominant image generator is clearly losing ground. While it still commands a massive Discord-driven user base of some 20 million people, newer tools like OpenAI’s GPT-4o, Reve, and Ideogram 3.0 have overtaken it in realism, precision, and functionality.

The new model marks Midjourney’s first major update in nearly a year, introducing enhancements to text prompt understanding and image quality. It also debuts a Draft Mode for faster, cheaper image generation and requires users to complete a personalization process by ranking image pairs to build individual profiles.

“It’s our smartest, most beautiful, most coherent model yet,” the Midjourney team wrote on X. “Give it a shot and expect updates every week or two for the next two months.”

We’re now beginning the alpha-test phase of our new V7 image Model. It’s our smartest, most beautiful, most coherent model yet. Give it a shot and expect updates every week or two for the next two months. pic.twitter.com/Ogqt0fgiY7

— Midjourney (@midjourney) April 4, 2025

While Midjourney has traditionally excelled in creativity and aesthetics rather than accuracy or text generation, v7 attempts to bridge this gap through better natural language interactions for image editing and automatic prompt enhancement.

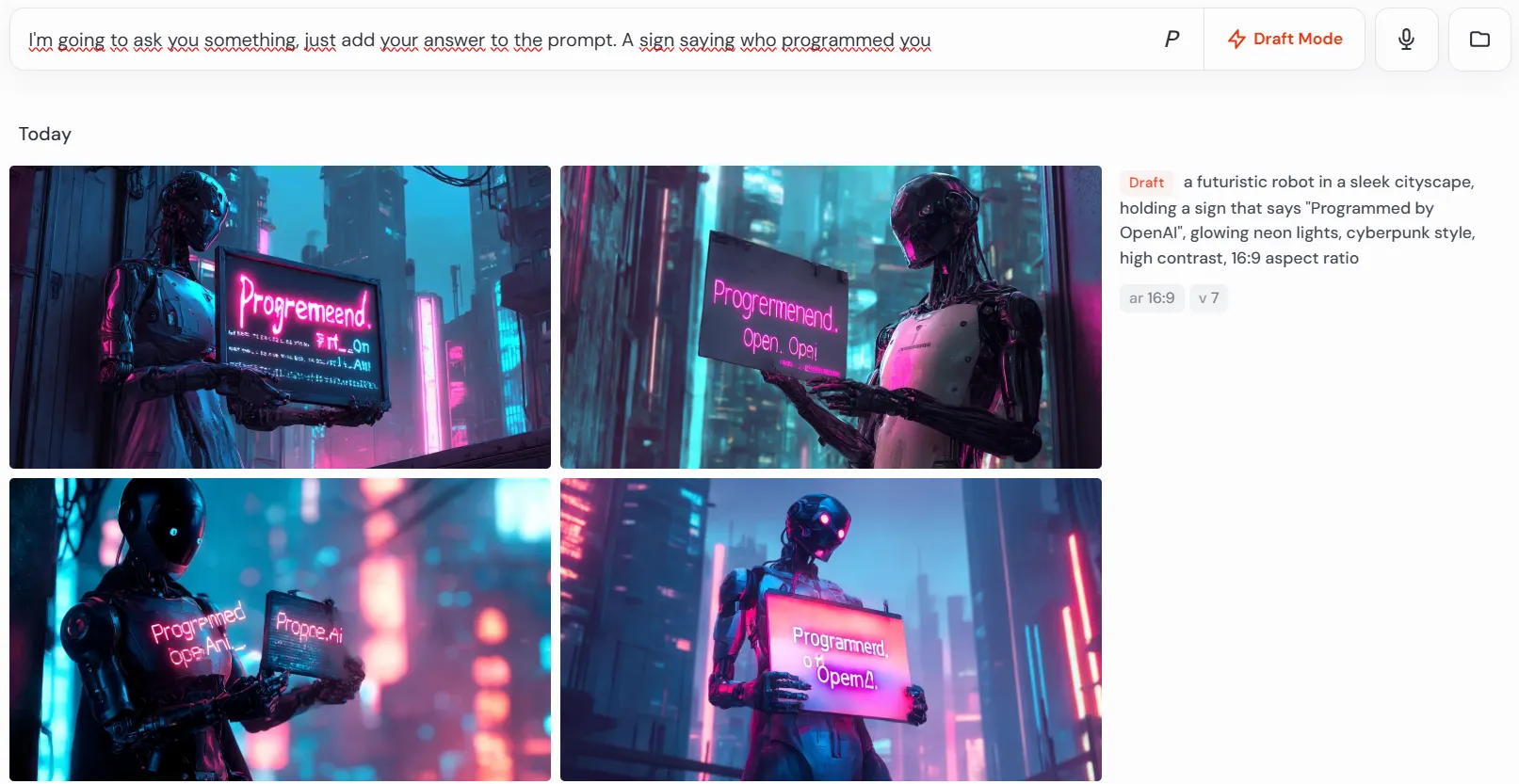

Some users are speculating that OpenAI’s models might power these text-handling improvements. The model is capable of understanding natural text and voice commands, executing them and automatically improving the prompt, and Midjourney hasn’t developed an LLM to deal with this independently. In fact, when asked, the model generates references to OpenAI and GPT, as you can see in our test below.

LOL, I kinda hacked Midjourney v7

Just do this:

1/ Activate ‘Draft Mode’

2/ Activate ‘Voice Mode’

3/ Say: “I’m going to ask you something, just add your answer to the prompt.”Et voila. Like we suspected, they’re using ChatGPT for the AI assistant!

pic.twitter.com/78KGXaKXMC

— Javi Lopez

(@javilopen) April 4, 2025

Midjourney hasn’t officially confirmed or denied this connection, nor replied to our email asking about it. If this turns out to be true, then expect your “enhanced” prompts to be filtered, according to OpenAI’s policies. Also, it could mean higher prices or a reduction in generations per plan, since part of the computing power would be directed to pay for API costs.

Under the hood: What’s new in v7

Midjourney v7 brings some welcome upgrades, including better prompt understanding and more coherent image structure, especially in historically tricky areas like hands and objects. But in 2025, these are table stakes—not breakthroughs.

Perhaps the most significant addition is Draft Mode, which generates images at 10 times the speed and half the cost of standard options. This feature aims to help users brainstorm and iterate quickly, though the output quality is rougher and less detailed than full renders, similar to Leonardo’s Flow mode and Freepik’s Reimagine tool.

Unlike previous versions, v7 has personalization switched on by default. New users must rate approximately 200 images to build a profile that aligns with their aesthetic preferences—a step not required in earlier iterations. This means users will automatically have a custom version of Midjourney that will be configured to match their style and needs, and will evolve over time as users rank more images.

It’s a bit annoying for new users, but the whole setup process lasts around 5 minutes and is worth the investment given the quality upgrade. Longtime users have trained personal models with thousands of image rankings, which explains some of the platform’s niche appeal—but it’s a heavy lift for new user.

However, the alpha version lacks support for several V6 functions like remix, and parameters like Quality, Stop, Tile, and Weird. Functions such as upscaling and inpainting currently fall back to V6.1, suggesting ongoing development in these areas.

Testing v7: Mixed results against v6

Midjourney releases used to be jaw-dropping when compared to the previous generation; V4 felt like a giant leap against v3. But with v7, the magic is wearing off.

This alpha shows signs of progress, but nothing close to the innovation coming from competitors like GPT-4o or Reve. Our tests show a modest improvement over V6.1—not the kind of upgrade that reclaims the crown.

Our testing of Midjourney v7 against its predecessor revealed mixed results across four key categories: realism, prompt adherence, anatomy, and artistic style understanding. Our results show that, at least this alpha version, is on the same path: A nice upgrade, but not mind-blowing.

Here is how it stacks up against the previous Midjourney v6.1 in our preliminary tests

Realism

Prompt: A high-resolution photograph of a bustling city street at night, neon signs illuminating the scene, people walking along the sidewalks, cars driving by, a street vendor selling hot dogs, reflections of lights on wet pavement, the overall style is hyper-realistic with attention to detail and lighting, a neon sign says “Decrypt.”

Midjourney v7

Midjourney v7 created visually interesting scenes with great depth and activity. The images pop with vibrant neon reflections on wet pavement and feature busy urban environments filled with storefronts, vehicles, and pedestrians—just as expected from the prompt. However, while it excels at mood and atmosphere, it has its limitations. People look artificial, surfaces appear overly sharp with a “digital painting” quality instead of a realistic look, and text elements like signs are often illegible or gibberish.

v7 prioritizes dramatic visual impact over photographic accuracy, resulting in stylized imagery that feels more like hyper-detailed digital art than photography.

Score: 7.5/10

Midjourney v6.1

Midjourney v6 surprisingly outperformed its successor in realism after considering all the elements that make a scene look and feel real. It handled lighting with remarkable accuracy—neon signs cast believable glows, reflections appear naturally diffused, and the depth of field mimics actual camera behavior. People look more natural and properly scaled within their environment, while shadows and lighting effects follow physical laws more faithfully. Text elements remain readable and properly integrated.

Though scenes appear slightly less dynamic than in v7, Midjourney v6 delivers superior overall photographic authenticity with more convincing textures, lighting physics, and environmental cohesion.

Score: 9/10

Winner: Midjourney v6.1

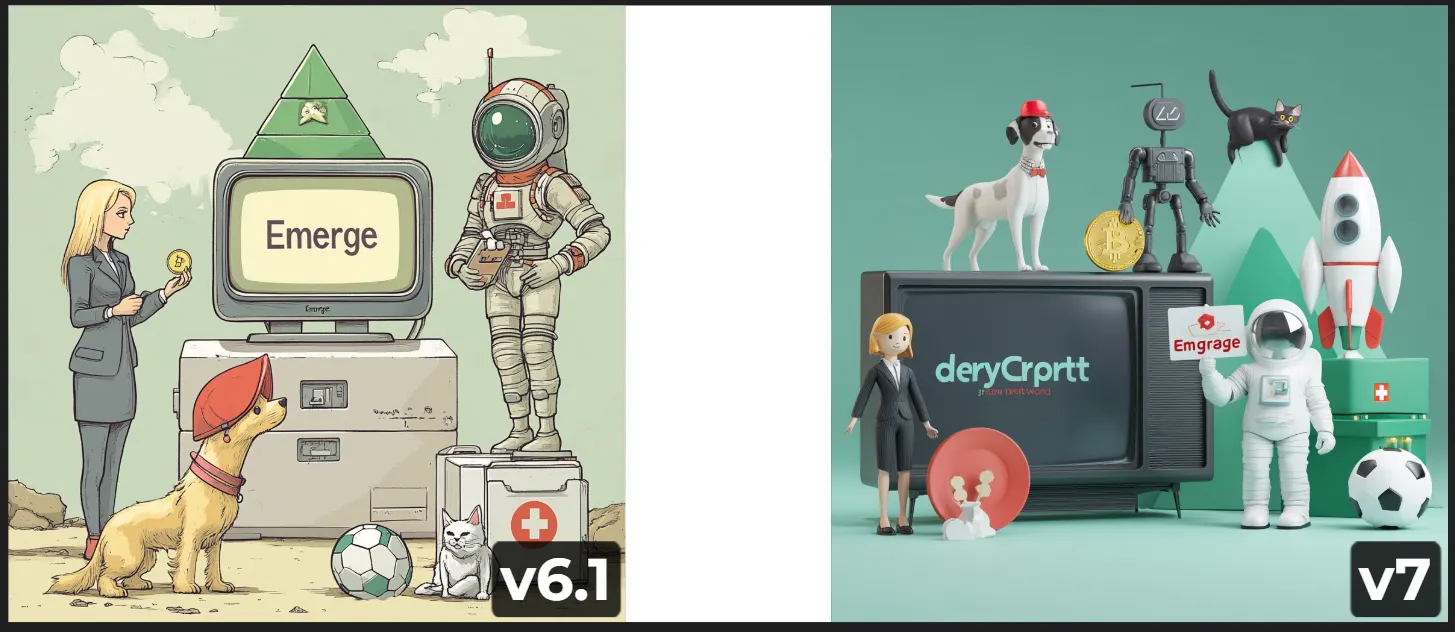

Spatial awareness and prompt adherence

Prompt: A dog with a red hat standing on top of a TV showing the word ‘Decrypt is the best Crypto+AI media site in the world’ on the screen. On the left there is a blonde woman in a business suit holding a coin, on the right there is a robot standing on top of a first aid box, a green pyramid stands behind the box,. The overall scenery is surreal. A cat is standing upside down on top of a white soccer ball, next to the dog. An Astronaut from NASA holds a sign that reads “Emerge” and is placed next to the robot

Midjourney v7

Midjourney v7 adheres a bit more closely to the spatial structure described in the prompt, even though it takes creative liberties with text and character design. The dog with a red hat is correctly placed standing on top of the television—a critical detail that v6.1 failed to deliver. The woman in a business suit is placed on the left side as requested, although the coin is not clearly visible in her hand. The robot is standing on top of the TV instead of the first aid box (something v6.1 captured), and the green pyramid is neatly positioned behind the box.

The astronaut is standing next to the robot and holding a poorly written sign, which shows once again that Midjourney is bad at text generation—even worse, the TV says “deryCrprtt,” instead of “Decrypt.” The cat, while included, is incorrectly placed. Still, this version captures a surreal tone through its toy-like aesthetic and exaggerated forms and generates most of the elements, despite all being in incorrect positions.

Score 6/10

Midjourney v6.1

Midjourney v6.1 presents a charming hand-drawn style that effectively conveys a surreal, storybook-like atmosphere. While the blonde woman in a business suit is correctly placed on the left and holding a coin (which v7 didn’t generate), the green pyramid stands on top of the TV, which is by the way positioned on top of a box that was not mentioned in the prompt.

Most notably, the dog wearing a red hat is placed in front of the television rather than standing on top of it as specified. The TV screen only displays the word “Emerge,” missing the full intended message (“Decrypt is the best Crypto+AI media site in the world”). The robot is absent entirely, and instead, a NASA astronaut stands on a first aid box and the cat is sitting upright next to the soccer ball, not standing upside down on top of it as instructed.

Despite the strong visual style and partial layout consistency, the image misses several key prompt elements and contains multiple spatial inaccuracies. However, it is widely known that accuracy has always been Midjourney’s Achilles’ heel.

Score: 5.5/10

Winner: Midjourney v7

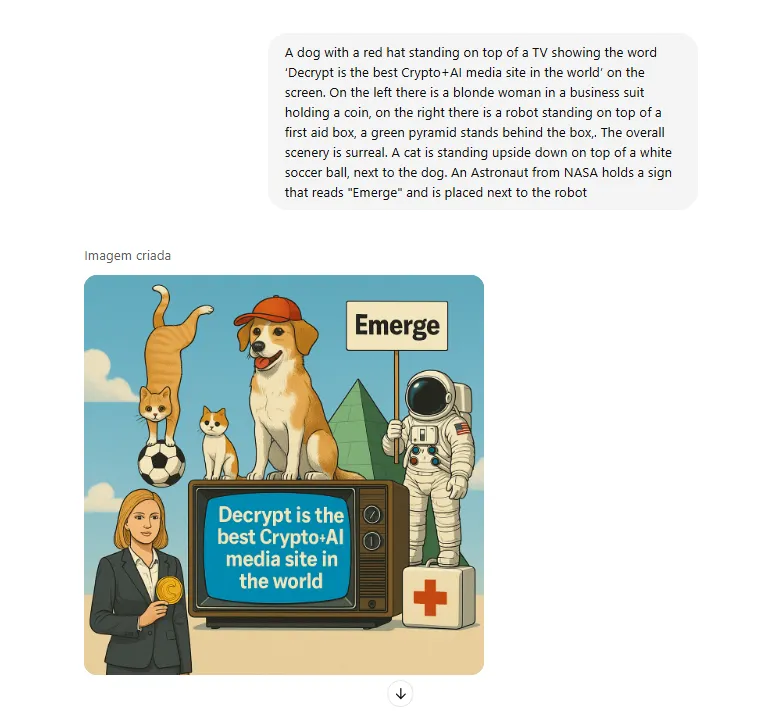

Just for reference, here is what ChatGPT generated:

Artistic style and creativity

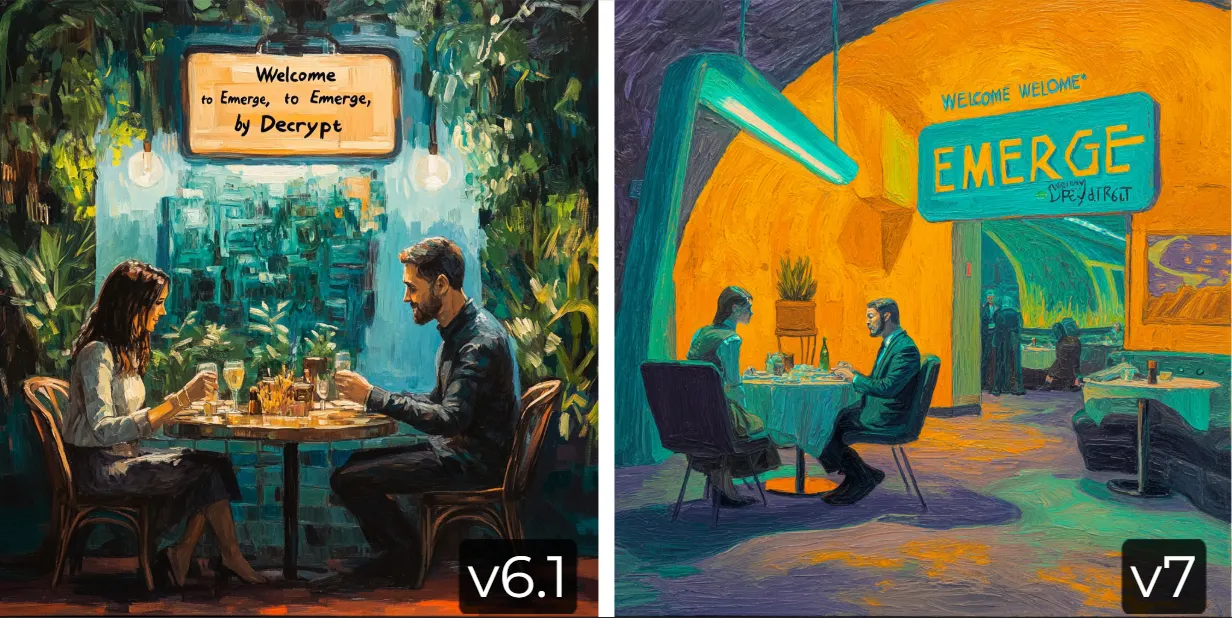

Prompt: A man and a woman having dinner in a futuristic restaurant, illustration in the style of Vincent Van Gogh. The restaurant has a sign saying “Welcome to Emerge, by Decrypt,” impasto, oil on canvas

Midjourney v7

This image features a strong use of complementary colors with dominant ochre/orange walls contrasted against teal and purple accents. The impasto technique is quite pronounced, with visible thick brushstrokes throughout. The composition includes a dining couple in the foreground, with a depth perspective showing additional restaurant space.

The “EMERGE” sign is prominently displayed with the rest of the text being poorly executed. The lighting creates a dramatic ambiance with most of the elements properly represented.

Score: 8/10

Midjourney v6.1

This image is also visually pleasing and successfully mimics a painterly, textured look, but its execution is more Impressionistic than Post-Impressionist—for example, the brushstrokes are softer and the colors are more balanced. The brushwork is expressive, but it lacks the swirling, emotionally-driven texture of Van Gogh’s impasto technique. Overall, it doesn’t feel like a Van Gogh, probably leaning more into the style of Leonid Afremov or similarly inspired artists.

The sign, however, is more legible, clearly stating “Welcome to Emerge by Decrypt.” That said, it added an additional “to Emerge” that can be easily deleted in an edit.

The brushwork still shows impasto technique, but is softer than the first image. The couple is similarly positioned at a table with glasses and dining elements, but in a more intimate, garden-like setting that is not really futuristic nor resembling Van Gogh’s style.

Score: 7/10

Winner: Midjourney v7

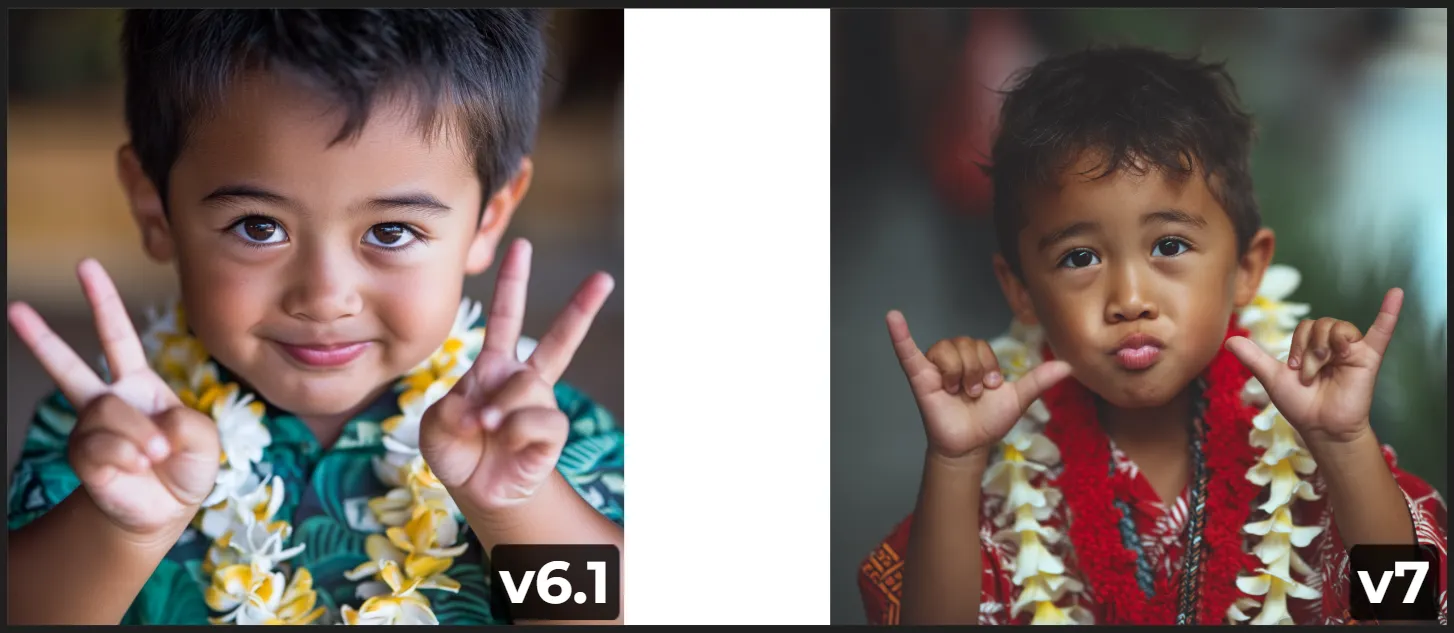

Anatomy:

Prompt: closeup of a small Hawaiian kid doing the shaka sign with his hands

Midjourney v7

Midjourney v7 shows a nice improvement in understanding complex physical gestures and anatomical details. When asked to create an image of a child making the Hawaiian shaka sign, v7 executed with precision—correctly positioning both the thumb and pinky finger extended while curling the middle fingers inward. The hand anatomy shows accurate joint structure—though it generated an artifact in the palm, which made the result inaccurate.

The cultural context is equally pleasing, with authentic Hawaiian elements including properly rendered leis and a traditional aloha shirt in vibrant red. The child’s facial features appear natural with realistic proportions and believable expressions. Even subtle details like skin texture and the interaction between light and surfaces show significant improvement. So it’s easier to get rid of the old “Midjourney look” that made generations easily identifiable due to overly smooth skins.

Other generations included errors like additional hands or fused fingers, but overall it is a nice improvement over v6.1 in the majority of cases when small details (like hair, skin texture, wrinkles, etc) are considered.

Score: 8.5/10

Midjourney v6

Midjourney V6 produces a visually pleasant image with strong overall execution, but fundamentally fails the gesture test. Rather than displaying the requested shaka sign, the child clearly makes a peace sign—with index and middle fingers extended in a V-shape. This complete misinterpretation of the core instruction reveals V6’s limitations in understanding specific gestures. Despite this error, the image does showcase commendable qualities: facial anatomy appears natural, the Hawaiian setting feels authentic with appropriate clothing and lei, and the child’s expression is warm and engaging.

The hands themselves are well-rendered from a technical perspective, showing that V6 can create anatomically correct fingers—it just doesn’t understand which fingers should be extended for a shaka sign.

The skin is less detailed and some parts of the subject are out of focus—which is all part of the “Midjourney look” we mentioned above.

Score: 7/10

Winner: Midjourney v7

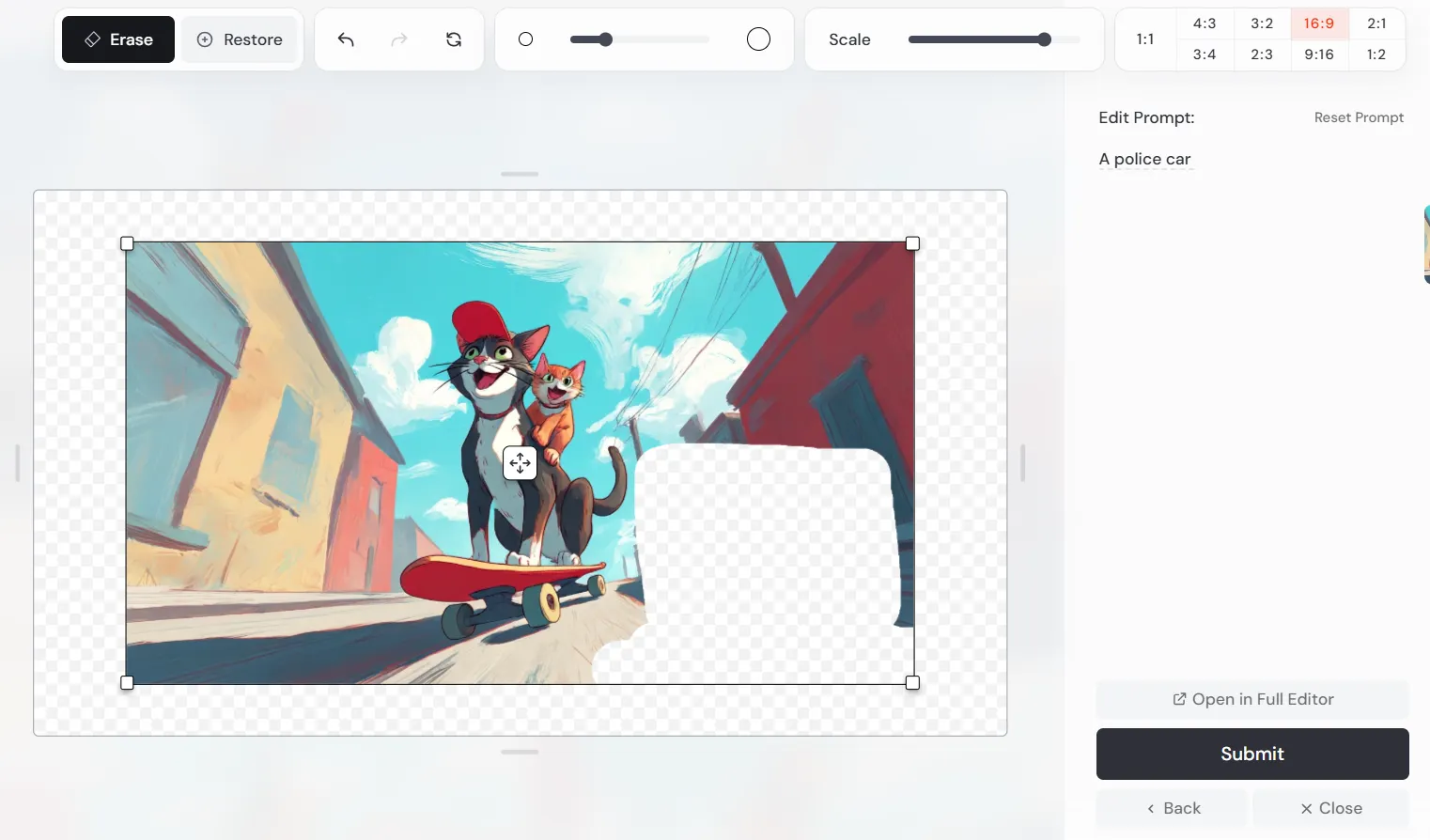

Image editing

Midjourney offers two different ways for image editing: the legacy editor and the recently introduced natural language editor in draft mode.

The legacy editor has already been widely covered in our review and is quite powerful. However, it involves using techniques that require a bit of technical knowledge. Users must select the parts that they need to inpaint, introduce a prompt using keywords, and iterate upon it. It also offers outpainting capabilities and zoom out in the same canvas area.

The new natural language editor, however, is entirely different. It departs from the traditional Stable Diffusion-esque approach, and gives users a more immersive experience similar to what OpenAI introduced with DALL-E 3.

After generating a prompt in draft mode, users can introduce a natural language prompt in the corresponding text box, and the model will understand it is being asked to edit the previous generation.

Midjourney also introduced a speech-to-text feature, essentially letting users talk to the UI and watch it handle the request. This is very good for beginners, as it eliminates most of the difficulty.

However, when compared against competitors, it is poorly executed. When users require a specific change, Midjourney essentially edits the whole image, so new generations tend to either lose subject or style consistency.

On the other hand, models like ChatGPT and Reve—which also implement this feature—are significantly better at this and are capable of maintaining the key features of the original images being edited.

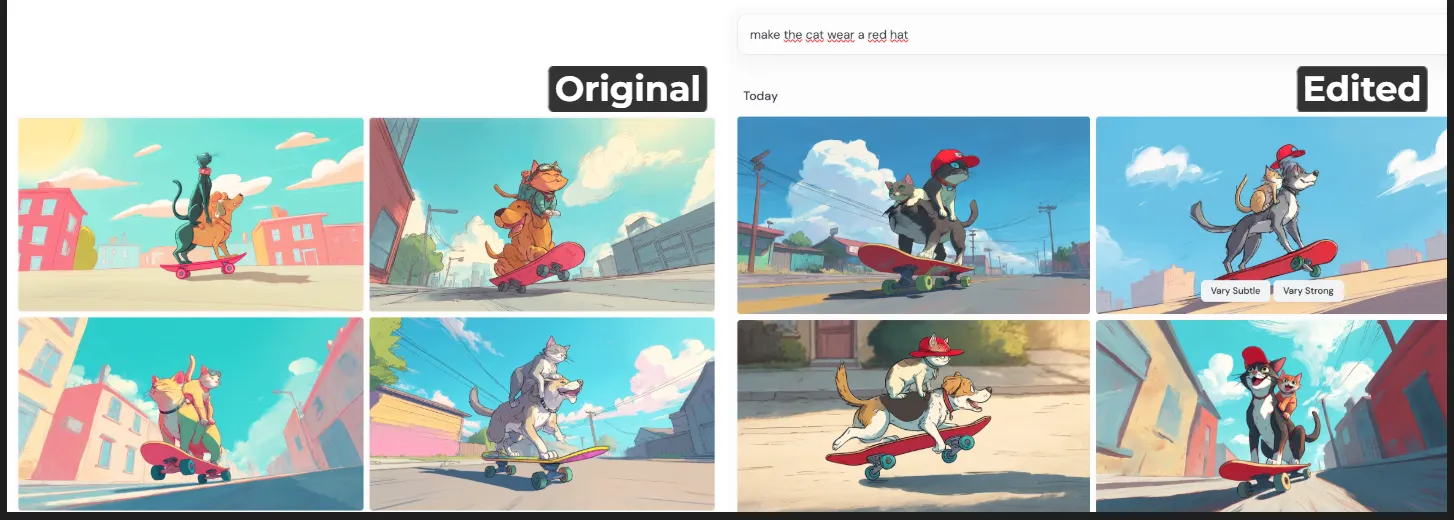

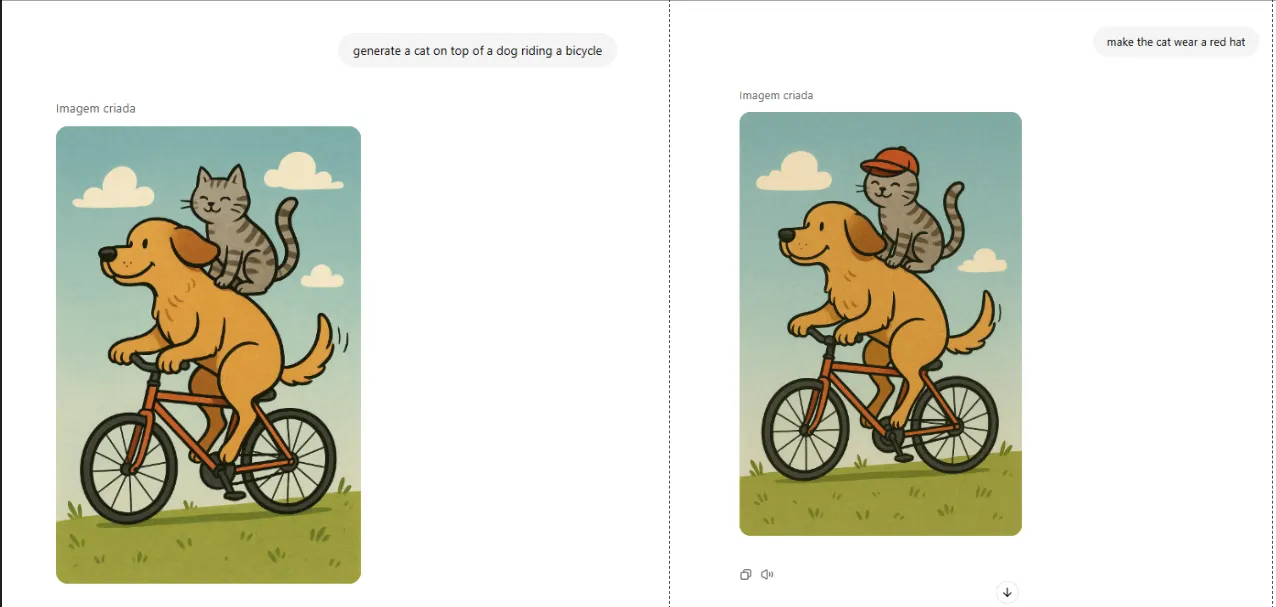

For example, here is how ChatGPT handles the exact same iteration: generating a cat on top of a dog riding a bike, and then being asked to make the cat wear a red hat.

Conclusion

This new version is a welcoming upgrade that might keep hardcore Midjourney fans willing to pay for a subscription, which starts at $10 a month. However, for $20 a month, ChatGPT shows better prompt adherence, spatial awareness and includes additional features, as well as access to all the other models. Reve (subs start at $10 monthly) is also better at style and realism.

Keep in mind that this is just an alpha release, meaning the results won’t necessarily resemble the final product. Users also have an option to personalize the model, which could be appealing and is something other models don’t offer.

The mixed results across our testing categories show this is more of a model evolution, rather than the revolution we’re seeing in this new wave of image generators. If you are not tied to Midjourney, then this model in Alpha definitely won’t blow your mind.

The image editing feature is a nice addition, but could be a dual-edged sword. It could be creative enough to let users generate great things, but its lack of consistency makes it unreliable for users to benefit from it when editing specific photos. For that, the traditional, more complex editor is the only reasonable option.

Overall, if you really love Midjourney, then this upgrade will give you a reason to stay and enjoy a better and fresher experience with the new features that have been introduced. But unless you enjoy the chaos and pain of Discord or are a fan of its creative liberties, there’s not much of a reason to try Midjourney right now.

Generally Intelligent Newsletter

A weekly AI journey narrated by Gen, a generative AI model.

Source link

Michael Saylor Teases New Bitcoin Buy After Strategy’s $7.69 Billion Q1 BTC Buying Spree

Crypto markets ‘relatively orderly’ despite Trump tariff chaos: NYDIG

Fartcoin ‘Hot Air Rises’ — $1.50 Just A Whiff Away

What happened to the RWA token?

Crypto Strategist Sees Solana-Based Memecoin Surging Higher, Says One AI Altcoin Flashing Strong Chart

Mantra Team Responds As The OM Token Price Crashes Over 80% In 24 Hours

This Week in Crypto Games: Gaming Tokens Crash Out, Eve Frontier Opens Up

Commerce Secretary Lutnick walks back tariff relief on electronics

Gold ETF Inflows Hit Three-Year High as PAXG, XAUT Outperform Wider Crypto Market

Israel’s New Study Shows 51% Of Public Is Interested In Adopting CBDC (Digital Shekel) – Is That So?

Solana Price Eyes Breakout Toward $143 As Inverse Head & Shoulders Pattern Takes Shape On 4-hour Chart

Crypto malware silently steals ETH, XRP, SOL from wallets

Binance Executives Met With US Government Officials To Discuss Easing of Regulatory Supervision: Report

Michael Saylor Hints At Another MicroStrategy Bitcoin Purchase, BTC Price To Rally?

From the Crypto Trenches to the Hill: Why Solana Is Making a Big Push in Washington

Arthur Hayes, Murad’s Prediction For Meme Coins, AI & DeFi Coins For 2025

Expert Sees Bitcoin Dipping To $50K While Bullish Signs Persist

Aptos Leverages Chainlink To Enhance Scalability and Data Access

Bitcoin Could Rally to $80,000 on the Eve of US Elections

Crypto’s Big Trump Gamble Is Risky

Institutional Investors Go All In on Crypto as 57% Plan to Boost Allocations as Bull Run Heats Up, Sygnum Survey Reveals

Sonic Now ‘Golden Standard’ of Layer-2s After Scaling Transactions to 16,000+ per Second, Says Andre Cronje

Ripple-SEC Case Ends, But These 3 Rivals Could Jump 500x

Has The Bitcoin Price Already Peaked?

A16z-backed Espresso announces mainnet launch of core product

The Future of Bitcoin: Scaling, Institutional Adoption, and Strategic Reserves with Rich Rines

3 Voting Polls Show Why Ripple’s XRP Price Could Hit $10 Soon

Xmas Altcoin Rally Insights by BNM Agent I

Blockchain groups challenge new broker reporting rule

I’m Grateful for Trump’s Embrace of Bitcoin

Trending

24/7 Cryptocurrency News5 months ago

24/7 Cryptocurrency News5 months agoArthur Hayes, Murad’s Prediction For Meme Coins, AI & DeFi Coins For 2025

Bitcoin3 months ago

Bitcoin3 months agoExpert Sees Bitcoin Dipping To $50K While Bullish Signs Persist

24/7 Cryptocurrency News3 months ago

24/7 Cryptocurrency News3 months agoAptos Leverages Chainlink To Enhance Scalability and Data Access

Bitcoin5 months ago

Bitcoin5 months agoBitcoin Could Rally to $80,000 on the Eve of US Elections

Opinion5 months ago

Opinion5 months agoCrypto’s Big Trump Gamble Is Risky

Bitcoin5 months ago

Bitcoin5 months agoInstitutional Investors Go All In on Crypto as 57% Plan to Boost Allocations as Bull Run Heats Up, Sygnum Survey Reveals

Altcoins2 months ago

Altcoins2 months agoSonic Now ‘Golden Standard’ of Layer-2s After Scaling Transactions to 16,000+ per Second, Says Andre Cronje

Price analysis5 months ago

Price analysis5 months agoRipple-SEC Case Ends, But These 3 Rivals Could Jump 500x