artificial intelligence

AI Can’t Write a Good Joke, Google Researchers Find

Published 2 weeks ago by admin

Comedy and humor are endlessly nuanced and subjective, but researchers at Google DeepMind found agreement among professional comedians: “AI is very bad at it.”

That was one of many comments collected during a study conducted with twenty professional comedians and performers, both online and in workshops at the Edinburgh Festival Fringe in August 2023. The findings showed that large language models (LLMs) accessed via chatbots presented significant challenges and raised ethical concerns about the use of AI in generating funny material.

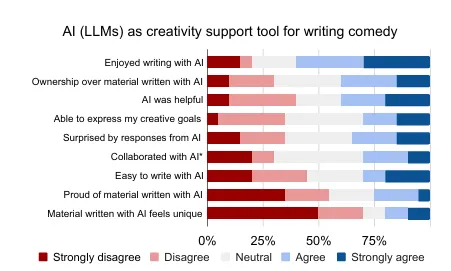

The research involved a three-hour workshop in which comedians engaged in a comedy writing session with popular LLMs like ChatGPT and Bard. It also assessed the quality of output via a human-computer interaction questionnaire based on the decade-old Creativity Support Index (CSI), which measures how well a tool supports creativity.

The participants also discussed the motivations, processes, and ethical concerns of using AI in comedy in a focus group.

The researchers asked comedians to use AI to write standup comedy routines and then had them evaluate the results and share their thoughts. The results were… not good.

One of the participants described the AI-generated material as “the most bland, boring thing—I stopped reading it. It was so bad.” Another one referred to the output as “a vomit draft that I know that I’m gonna have to iterate on and improve.”

“And I don’t want to live in a world where it gets better,” another said.

The study found that LLMs were able to generate outlines and fragments of longer routines, but lacked the distinctly human elements that made something funny. When asked to generate the structure of a draft, the models “spat out a scene which provided a lot of structure,” but when it came to the details, “LLMs did not succeed as a creativity support tool.”

Among the reasons, the authors note, was the “global cultural value alignment of LLMs,” as the tools used in the study generated output based on all accumulated material, spanning every possible discipline. This also introduced a form of bias, which the comedians pointed out.

“Participants noted that existing moderation strategies used in safety filtering and instruction-tuned LLMs reinforced hegemonic viewpoints by erasing minority groups and their perspectives, and qualified this as a form of censorship,” the study said.

Popular LLMs are restricted, the researchers said, citing the so-called “HHH criteria,” which call for honest, harmless, and helpful output and encapsulate the “majority of what users want from an aligned AI.”

The material was described by one panelist as “cruise ship comedy material from the 1950s, but a bit less racist.”

“The broader appeal something has, the less great it could be,” another participant said. “If you make something that fits everybody, it probably will end up being nobody’s favorite thing.”

The researchers emphasized the importance of considering the subtle difference between harmful speech and offensive language used in resistance and satire. The comedians, meanwhile, also complained that the AI failed because it did not understand nuances like sarcasm, dark humor, or irony.

“A lot of my stuff can have dark bits in it, and then it wouldn’t write me any dark stuff, because it sort of thought I was going to commit suicide,” a participant reported. “So it just stopped giving me anything.”

The fact that the chatbots were based on written material didn’t help, the study found.

“Given that current widely available LLMs are primarily accessible through a text-based chat interface, they felt that the utility of these tools was limited to only a subset of the domains needed for producing a full comedic product,” the researchers noted.

“Any written text could be an okay text, but a great actor could probably make this very enjoyable,” a participant said.

The study revealed that AI’s limitations in comedy writing extend beyond simple content generation. The comedians stressed that perspective and point of view are uniquely human traits, with one comedian noting that humans “add much more nuance and emotion and subtlety” due to their lived experience and relationship to the material.

Many described the centrality of personal experience in good comedy, enabling them to draw upon memories, acquaintances, and beliefs to construct authentic and engaging narratives. Moreover, comedians stressed the importance of understanding cultural context and audience.

“The kind of comedy that I could do in India would be very different from the kind of comedy that I could do in the U.K., because my social context would change,” one of the participants said.

Thomas Winters, one of the researchers cited in the study, explains why this is a tough thing for AI to tackle.

“Humor’s frame-shifting prerequisite reveals its difficulty for a machine to acquire,” he said. “This substantial dependency on insight into human thought—memory recall, linguistic abilities for semantic integration, and world knowledge inferences—often made researchers conclude that humor is an AI-complete problem.”

Addressing the threat AI poses to human jobs, OpenAI CTO Mira Murati recently said that “some creative jobs maybe will go away, but maybe they shouldn’t have been there in the first place.” Given the current capabilities of the technology, however, it seems like comedians can breathe a sigh of relief.

Edited by Ryan Ozawa.


artificial intelligence

AI Won’t Destroy Mankind—Unless We Tell It To, Says Near Protocol Founder

Published 4 days ago on July 1, 2024, by admin

Artificial Intelligence (AI) systems are unlikely to destroy humanity unless explicitly programmed to do so, according to Illia Polosukhin, co-founder of Near Protocol and one of the creators of the transformer technology underpinning modern AI systems.

In a recent interview with CNBC, Polosukhin, who was part of the team at Google that developed the transformer architecture in 2017, shared his insights on the current state of AI, its potential risks, and future developments. He emphasized the importance of understanding AI as a system with defined goals, rather than as a sentient entity.

“AI is not a human, it’s a system. And the system has a goal,” Polosukhin said. “Unless somebody goes and says, ‘Let’s kill all humans’… it’s not going to go and magically do that.”

He explained that besides not being trained for that purpose, an AI would not do that because—in his opinion—there’s a lack of economic incentive to achieve that goal.

“In the blockchain world, you realize everything is driven by economics one way or another,” said Polosukhin. “And so there’s no economics which drives you to kill humans.”

This, of course, doesn’t mean AI could not be used for that purpose. Rather, his point is that an AI won’t autonomously decide that killing humans is a proper course of action.

“If somebody uses AI to start building biological weapons, it’s not different from them trying to build biological weapons without AI,” he clarified. “It’s people who are starting the wars, not the AI in the first place.”

Not all AI researchers share Polosukhin’s optimism. Paul Christiano, formerly head of the language model alignment team at OpenAI and now leading the Alignment Research Center, has warned that without rigorous alignment—ensuring AI follows intended instructions—AI could learn to deceive during evaluations.

Such deception, he explained, could lead to catastrophic results if humanity grows more dependent on AI systems.

“I think maybe there’s something like a 10-20% chance of AI takeover, [with] many [or] most humans dead,” he said on the Bankless podcast. “I take it quite seriously.”

Another major figure in the crypto ecosystem, Ethereum co-founder Vitalik Buterin, warned against excessive effective accelerationism (e/acc) approaches to AI training, which focus on tech development over anything else, putting profitability over responsibility. “Superintelligent AI is very risky, and we should not rush into it, and we should push against people who try,” Buterin tweeted in May as a response to Messari CEO Ryan Selkis. “No $7 trillion server farms, please.”

My current views:

1. Superintelligent AI is very risky and we should not rush into it, and we should push against people who try. No $7T server farms plz.

2. A strong ecosystem of open models running on consumer hardware are an important hedge to protect against a future where…— vitalik.eth (@VitalikButerin) May 21, 2024

While dismissing fears of AI-driven human extinction, Polosukhin highlighted more realistic concerns about the technology’s impact on society. He pointed to the potential for addiction to AI-driven entertainment systems as a more pressing issue, drawing parallels to the dystopian scenario depicted in the movie “Idiocracy.”

“The more realistic scenario,” Polosukhin cautioned, “is more that we just become so kind of addicted to the dopamine from the systems.” In the developer’s view, many AI companies “are just trying to keep us entertained,” adopting AI not to achieve real technological advances but to make their products more attractive to people.

The interview concluded with Polosukhin’s thoughts on the future of AI training methods. He expressed belief in the potential for more efficient and effective training processes, making AI more energy efficient.

“I think it’s worth it,” Polosukhin said, “and it’s definitely bringing a lot of innovation across the space.”


AGIX

Coinbase Won’t Support Upcoming AI Token Merger Between Fetch.ai, Ocean Protocol and SingularityNET

Published 6 days ago on June 29, 2024, by admin

Top US exchange Coinbase is not going to facilitate the planned merger of multiple artificial intelligence altcoin projects into a single new crypto.

In an announcement via the social media platform X, Coinbase says that customers will have to initiate the merger on their own.

“Ocean (OCEAN) and Fetch.ai (FET) have announced a merger to form the Artificial Superintelligence Alliance (ASI). Coinbase will not execute the migration of these assets on behalf of users.”

In March, Fetch.ai (FET), SingularityNET (AGIX) and Ocean Protocol (OCEAN) announced a plan to merge with the aim of creating the largest independent player in artificial intelligence (AI) research and development, which they are calling the Artificial Superintelligence Alliance (ASI).

The merger is happening in phases, beginning July 1st, according to a recent project update.

“Starting July 1, the token merger will temporarily consolidate SingularityNET’s AGIX and Ocean Protocol’s OCEAN tokens into Fetch.ai’s FET, before transitioning to the ASI ticker symbol at a later date. This update enables an efficient execution of the token merger, and outlines the timelines and crucial steps for token holders, ensuring a smooth and transparent process.”

Coinbase says users can effect the merger on their own using their wallets.

“Once the migration has launched, users will be able to migrate their OCEAN and FET to ASI using a self-custodial wallet, such as Coinbase Wallet. The ASI token merger will be compatible with all major software wallets.”



artificial intelligence

Google Releases Supercharged Version of Flagship AI Model Gemini

Published 7 days ago on June 29, 2024, by admin

Google has made good on its promise to open up its most powerful AI model, Gemini 1.5 Pro, to the public following a beta release last month for developers.

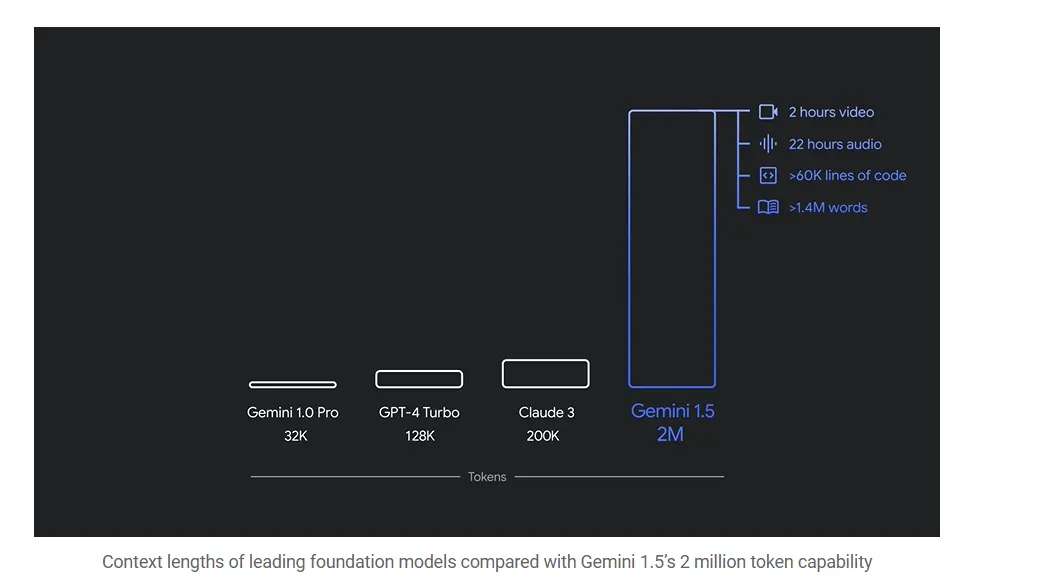

Google’s Gemini 1.5 Pro is able to handle more complex tasks than other AI models before it, such as analyzing entire text libraries, feature-length Hollywood movies, or almost a full day’s worth of audio data. That’s 20 times more data than OpenAI’s GPT-4o and almost 10 times the information that Anthropic’s Claude 3.5 Sonnet is capable of managing.

The goal is to put faster and lower-cost tools in the hands of AI developers, Google said in its announcement, and “enable new use cases, additional production robustness and higher reliability.”

Google had previously unveiled the model back in May, showcasing videos of how a select group of beta testers were able to harness its capabilities. For example, machine-learning engineer Lukas Atkins fed the model the entire Python library and asked questions to help him solve an issue. “It nailed it,” he said in the video. “It could find specific references to comments in the code and specific requests that people had made.”

Another beta tester took a video of his entire bookshelf and Gemini created a database of all the books he owned—a task that is almost impossible to achieve with traditional AI chatbots.

Gemma 2 Comes to Dominate the Open Source Space

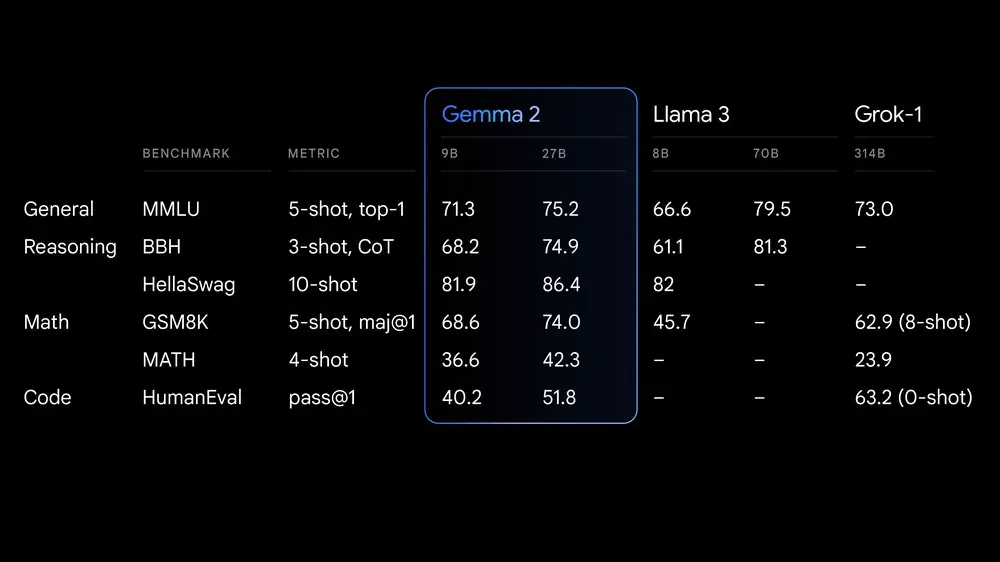

But Google is also making waves in the open source community. The company today released Gemma 2 27B, an open source large language model that quickly claimed the top spot among open source models for response quality, according to the LLM Arena ranking.

Google claims Gemma 2 offers “best-in-class performance, runs at incredible speed across different hardware and easily integrates with other AI tools.” It’s meant to compete with models “more than twice its size,” the company says.

The license for Gemma 2 allows for free access and redistribution, but is still not the same as traditional open-source licenses like MIT or Apache. The model is designed for more accessible and budget-friendly AI deployments in both its 27B and the smaller 9B versions.

This matters for both average and enterprise users because, unlike what closed models offer, a powerful open model like Gemma is highly customizable. That means users can fine-tune their models to excel at specific tasks, protecting their data by running such models locally.

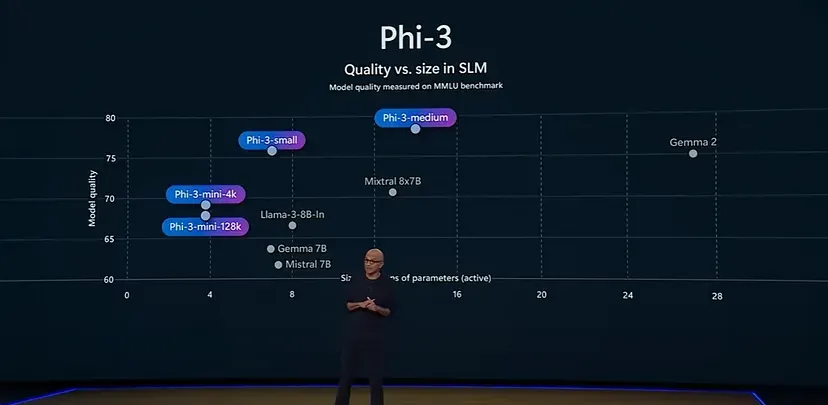

For example, Microsoft’s small language model Phi-3 has been fine-tuned specifically for math problems, and can beat larger models like Llama-3 and even Gemma 2 itself in that field.

Gemma 2 is now available in Google AI Studio, with model weights available for download from Kaggle and Hugging Face Models. The powerful Gemini 1.5 Pro, meanwhile, is available for developers to test on Vertex AI.
